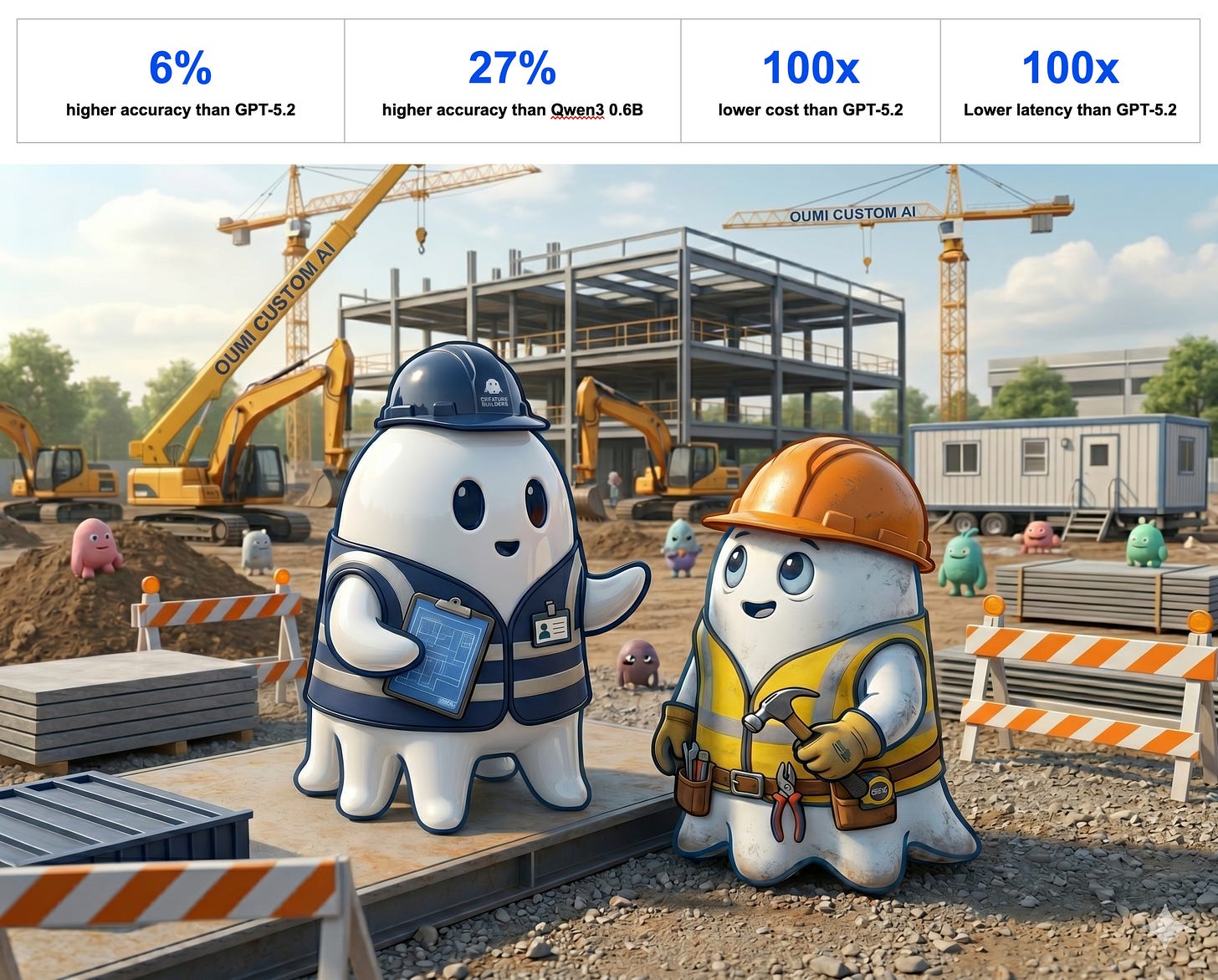

Case Study: DMG achieves 6% higher quality and 100x lower costs for invoice validation

With Oumi’s technology, DMG’s custom AI beat GPT-5.2 by 6% on both validity and appropriateness at 100x lower cost

“Oumi’s synthesis recipes took us from schema to 500 training samples in just a few iterations. Controlling data distribution was simple, and evolving from basic to complex queries required only small config changes. The declarative, version-controlled approach enabled rapid iteration and a production-ready model, without manual data creation.” — Ioanna Sanida, Data Science Team Lead

Problem

Divisions Maintenance Group (DMG) coordinates facility maintenance across thousands of properties, managing a shared pool of contractors (plumbers, electricians, handymen) who submit invoices for reimbursement after completing jobs. Each invoice must be validated on two dimensions: validity (correct formatting and categorization) and appropriateness (reasonable charges given the job scope, profession, and pricing norms). At scale, manual review was labor-intensive, slow, and expensive, and DMG’s existing automated approaches were underperforming: validity accuracy sat at just 72%, with no reliable baseline for appropriateness at all.

Solution

DMG partnered with Oumi to fine-tune an ultra-small language model (Qwen3 0.6B) purpose-built for invoice classification. The small footprint was critical: DMG’s volume demands and interest in edge deployment ruled out large proprietary models for production use.

The core challenge was data. DMG provided ~10k unlabeled invoice examples covering only plumbing, but the model needed to generalize across plumbing, electrical, and handyman domains. Oumi built a synthesis recipe to generate labeled training data, including entirely new examples for the missing domains. Random real invoice samples were leveraged as few-shot formatting examples within each synthesis prompt to increase sample diversity.
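The few-shot step described above can be illustrated with a minimal sketch. It is not Oumi's actual synthesis recipe: the function name `build_synthesis_prompt`, the prompt wording, and the label strings are all hypothetical, chosen only to show how randomly sampled real invoices might serve as formatting examples for a target domain.

```python
import random

# Domains named in the case study; only plumbing had real examples.
DOMAINS = ["plumbing", "electrical", "handyman"]


def build_synthesis_prompt(real_invoices, domain, label, k=3, rng=None):
    """Assemble one synthesis prompt (hypothetical helper, for illustration).

    real_invoices: list of raw invoice strings used only as formatting examples.
    domain:        target domain for the new invoice, e.g. "electrical".
    label:         desired label for the generated invoice, e.g. "valid".
    k:             number of few-shot examples to sample.
    rng:           optional random.Random instance for reproducibility.
    """
    rng = rng or random.Random()
    # A fresh random sample per prompt varies the examples the generator sees.
    shots = rng.sample(real_invoices, k=min(k, len(real_invoices)))

    lines = [
        f"Write one new {domain} invoice that a reviewer would label '{label}'.",
        "Match the formatting of the examples below; do not copy their content.",
        "",
    ]
    for i, shot in enumerate(shots, start=1):
        lines.append(f"Example {i}:")
        lines.append(shot)
        lines.append("")
    lines.append("New invoice:")
    return "\n".join(lines)
```

Calling `build_synthesis_prompt(invoices, "electrical", "valid", rng=random.Random(0))` with a list of plumbing invoices would yield a prompt that borrows their layout while asking for an electrical invoice. Passing a seeded `rng` keeps the sampling reproducible across runs.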

Outcome

To benchmark against a frontier model, Oumi launched a job to evaluate both the fine-tuned model and GPT-5.2 on real unlabeled data. Agreement rates were 93% on validity and 85% on appropriateness, without any GPT-5-generated data in training.
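An agreement rate on unlabeled data is the fraction of invoices for which two models assign the same label. The sketch below shows that calculation on toy data. The function name `agreement_rate` and the label strings are illustrative assumptions, not part of DMG's or Oumi's pipeline.

```python
def agreement_rate(labels_a, labels_b):
    """Return the fraction of positions where two label sequences match."""
    if len(labels_a) != len(labels_b):
        raise ValueError("Both models must label the same set of invoices.")
    if not labels_a:
        raise ValueError("At least one labeled invoice is required.")
    matches = sum(a == b for a, b in zip(labels_a, labels_b))
    return matches / len(labels_a)


# Toy example: two models labeling the same four invoices for validity.
small_model = ["valid", "invalid", "valid", "valid"]
frontier_model = ["valid", "invalid", "invalid", "valid"]
rate = agreement_rate(small_model, frontier_model)  # 3 of 4 labels match
```

The same function would be applied once per dimension (validity and appropriateness), since each is scored separately.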

Fine-tuning the 0.6B model delivered dramatic improvements on both tasks over the pre-fine-tuned model:

Validity accuracy: 72% → 99%

Appropriateness accuracy: 52% → 91%

These results mean that our fine-tuned model beat GPT-5.2 by 6 percentage points on both metrics. For context, Kimi K2 (a much larger model) achieved only marginally higher agreement at 95% and 87%. A sub-1B fine-tuned model effectively matched frontier-scale performance on this task, enabling DMG to move toward fully automated invoice processing with high reliability.